For decades, the concept of a “smart car” was largely confined to science fiction. We imagined vehicles that could not only transport us but also understand us, anticipate our needs, and become true partners on the road. Today, that fiction is rapidly becoming our reality. The era of simple voice commands for dialing a number or changing the radio station is drawing to a close. We are now witnessing the dawn of a new age, marked by the arrival of next-generation in-car personal assistants. These are not mere features; they are sophisticated, AI-driven co-pilots, fundamentally transforming the driving experience from a solitary task into a seamlessly connected, intuitive, and highly personalized journey.

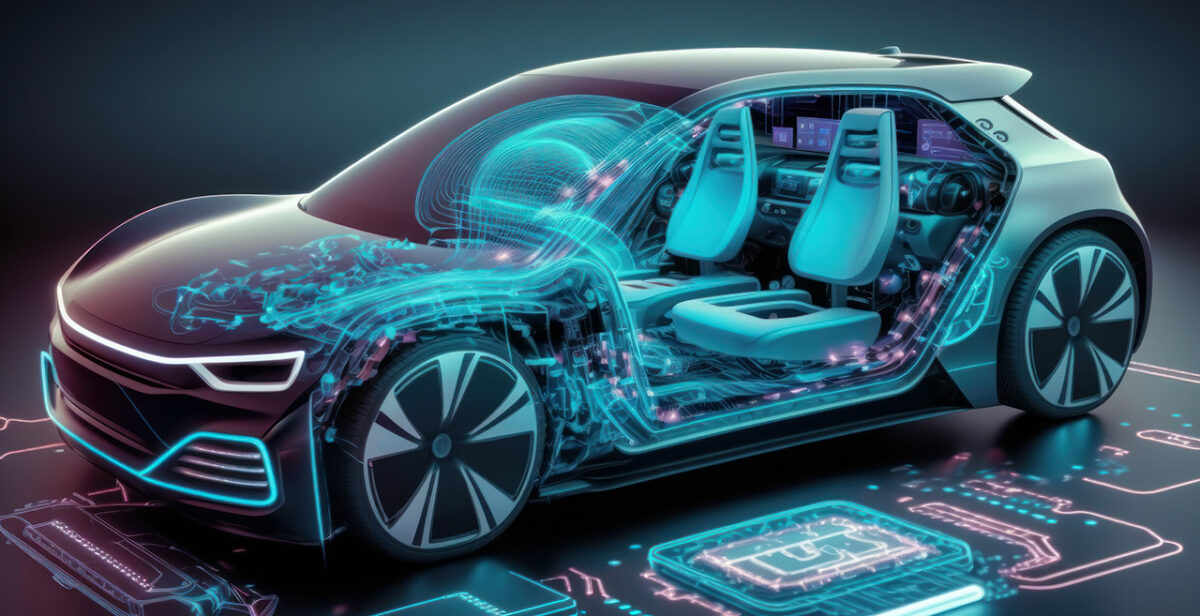

This revolution is powered by a convergence of technologies: generative artificial intelligence, vast cloud computing resources, advanced natural language processing, and the burgeoning ecosystem of the Internet of Things (IoT). The result is a shift from reactive command systems to proactive, contextual, and empathetic digital companions. This article will provide an in-depth exploration of this technological leap, examining the core technologies, the key players, the profound benefits, the significant challenges, and the thrilling future that lies just beyond the horizon.

A. From Simple Commands to Conversational Companions: The Evolutionary Leap

To truly appreciate the sophistication of today’s in-car assistants, it’s essential to understand their humble beginnings. The first iterations were rudimentary, built on a foundation of limited, pre-programmed phrases and commands.

The First Generation: Rule-Based Systems

Early systems, like the initial versions of Ford’s SYNC or BMW’s iDrive, operated on a strict “if-then” logic. The driver had to use specific, often awkward, syntax for the system to understand. Saying “Call John Mobile” might work, while “Phone my husband John on his cell” would result in a frustrating error message. These systems had no memory, no context, and no ability to learn. They were tools, and clumsy ones at that, requiring the driver to adapt to the machine’s limitations.
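To make the “if-then” behavior concrete, here is a minimal sketch of a rule-based matcher. The grammar (`call <name> <mobile|home|work>`) and the function name `handle_command` are assumptions made for illustration, not the actual logic of SYNC or iDrive.

```python
import re

# Hypothetical fixed grammar: "call <name> <mobile|home|work>".
CALL_PATTERN = re.compile(r"^call (?P<name>\w+) (?P<label>mobile|home|work)$")


def handle_command(utterance: str) -> str:
    """Return a dial action, or an error when the phrase is off-grammar."""
    match = CALL_PATTERN.match(utterance.strip().lower())
    if match is None:
        # No memory, no context, no fallback: anything else is rejected.
        return "ERROR: command not recognized"
    return f"DIAL {match['name']} ({match['label']})"


print(handle_command("Call John Mobile"))
print(handle_command("Phone my husband John on his cell"))
```

The first phrase fits the pattern and is dialed; the second expresses the same intent but falls outside the grammar, so it is rejected.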

The Smartphone Integration Era

The next phase involved integrating smartphones, primarily through Apple CarPlay and Android Auto. This was a significant step forward, as it brought the power of Siri and Google Assistant into the dashboard. While this improved the user interface and access to apps, it was still an extension of the phone. The car itself remained a relatively dumb vessel, and the experience was often siloed, with the car’s native functions (like climate control or seat massage) existing in a separate digital universe from the smartphone’s capabilities.

The Current Revolution: Generative AI and Large Language Models (LLMs)

The breakthrough that has catalyzed the “next-gen” label is the integration of generative AI and Large Language Models (LLMs), such as the technology underpinning OpenAI’s ChatGPT. Unlike their predecessors, these models are trained on colossal datasets, enabling them to understand natural, conversational language, grasp context, and generate human-like responses. They don’t just listen for keywords; they comprehend intent. This foundational shift is what separates a simple voice command system from a true in-car personal assistant.

B. The Core Technologies Powering the Next-Gen Driving Experience

The advanced capabilities of these new assistants are not the product of a single invention but a symphony of interconnected technologies working in harmony.

A. Generative AI and Large Language Models (LLMs): The Brain

At the core of these systems is a generative AI model. This is the “brain” that processes complex requests, manages multi-step tasks, and engages in fluid dialogue. For example, instead of giving separate commands like “Navigate to the city center” and then “Find Italian restaurants,” a driver can simply say, “I’m feeling like authentic Italian food in the city center, find a highly-rated place and navigate me there, and see if they have any specials tonight.” The AI can parse this entire request, execute a web search for reviews, cross-reference with navigation, and even pull information from the restaurant’s website, all within a single, conversational interaction.
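The chaining described above can be sketched as a short pipeline. Every function, restaurant name, and rating below is an invented stand-in: a real assistant would have a language model extract the steps and would call live search and navigation services instead of these stubs.

```python
# All tools and data here are hypothetical placeholders for illustration.

def search_restaurants(cuisine: str, area: str) -> list[dict]:
    # A real system would query a search or maps service here.
    return [
        {"name": "Trattoria Uno", "rating": 4.7},
        {"name": "Pasta Bar", "rating": 4.2},
    ]


def pick_highest_rated(candidates: list[dict]) -> dict:
    return max(candidates, key=lambda r: r["rating"])


def start_navigation(place: dict) -> str:
    return f"Navigating to {place['name']}"


def run_plan(cuisine: str, area: str) -> str:
    """Chain the steps a model might extract from a single utterance."""
    candidates = search_restaurants(cuisine, area)
    best = pick_highest_rated(candidates)
    return start_navigation(best)


print(run_plan("Italian", "city center"))
```

The point of the sketch is the hand-off: each step consumes the previous step's output, so the driver never has to issue the intermediate commands.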

B. Multimodal Sensing and Sensor Fusion: The Senses

A next-gen assistant is not just an auditory entity; it is a multimodal being. It leverages a suite of sensors to build a comprehensive understanding of the vehicle’s internal and external environment.

- Cameras: Interior cameras can monitor driver alertness, detect gaze direction (e.g., if the driver looks at a specific landmark, the assistant can offer information about it), and even recognize occupants to adjust personalized settings automatically.
- Microphone Arrays: Advanced noise-cancellation and beamforming technology allow the assistant to isolate the driver’s voice from passenger conversations, road noise, and music, ensuring accurate pickup even in noisy conditions.
- Biometric Sensors: Steering wheel or seat sensors can monitor heart rate and galvanic skin response, potentially allowing the assistant to detect driver stress or fatigue and suggest taking a break or playing calming music.
- Vehicle Telematics: The assistant has constant access to vehicle data (fuel level, battery charge, engine temperature, tire pressure), allowing it to proactively suggest actions like routing to a charging station or a gas station.
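As a rough illustration of how telematics data could drive proactive suggestions, the sketch below checks a snapshot of readings against fixed thresholds. The `Telemetry` fields and every threshold value are assumptions chosen for the example; real limits are vehicle-specific.

```python
from dataclasses import dataclass


@dataclass
class Telemetry:
    fuel_fraction: float      # 0.0 (empty) to 1.0 (full)
    tire_pressure_kpa: float  # lowest reading across the tires
    engine_temp_c: float


# Illustrative thresholds only, not taken from any manufacturer.
LOW_FUEL = 0.15
MIN_TIRE_KPA = 200.0
MAX_ENGINE_C = 110.0


def suggestions(t: Telemetry) -> list[str]:
    """Turn raw readings into driver-facing suggestions."""
    out = []
    if t.fuel_fraction < LOW_FUEL:
        out.append("Fuel is low: offer a route to the nearest gas station.")
    if t.tire_pressure_kpa < MIN_TIRE_KPA:
        out.append("Tire pressure is low: suggest a stop to check the tires.")
    if t.engine_temp_c > MAX_ENGINE_C:
        out.append("Engine is running hot: suggest pulling over safely.")
    return out


print(suggestions(Telemetry(fuel_fraction=0.10, tire_pressure_kpa=230.0, engine_temp_c=90.0)))
```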

C. Edge Computing and 5G Connectivity: The Nervous System

For an AI assistant to be responsive and low-latency, it cannot rely solely on cloud processing. A delayed response when asking for directions is not just annoying; it can be dangerous. This is where edge computing comes in. Basic processing and command execution happen locally within the car’s computer (the “edge”), ensuring instant responses for critical functions. Meanwhile, the high bandwidth and low latency of 5G connectivity enable the car to offload more complex tasks to the cloud in near real-time, such as processing complex natural language queries or downloading high-definition map updates on the fly.
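One way to picture the edge/cloud split is a small router that decides where each request runs. The intent names and the `route` function are hypothetical; this is a sketch of the idea under those assumptions, not any vendor's architecture.

```python
from enum import Enum


class Target(Enum):
    EDGE = "edge"                    # handled by the car's own computer
    CLOUD = "cloud"                  # offloaded over the network
    EDGE_FALLBACK = "edge_fallback"  # offline: reduced on-board handling


# Hypothetical set of intents that must respond instantly and work offline.
LOCAL_INTENTS = {"set_temperature", "adjust_volume", "open_window"}


def route(intent: str, connected: bool) -> Target:
    """Pick where a request is processed."""
    if intent in LOCAL_INTENTS:
        return Target.EDGE
    if connected:
        return Target.CLOUD
    # Complex request without connectivity: degrade instead of failing.
    return Target.EDGE_FALLBACK


print(route("set_temperature", connected=False))
print(route("find_restaurant", connected=True))
print(route("find_restaurant", connected=False))
```

Keeping critical intents in the local set means they never wait on the network, which is the safety argument made above.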

D. Personalization Engines and User Profiles: The Memory

True personalization is a hallmark of a next-gen assistant. Using machine learning, the system builds a detailed profile for each user. It learns your schedule, your music preferences, your favorite destinations, and even your driving habits. If you always stop for coffee on your morning commute, the assistant will soon learn to suggest your usual order as you approach the café. If you prefer a specific cabin temperature and seat position, it will adjust automatically upon recognizing you. This creates a deeply tailored experience that makes the car feel uniquely yours.
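The habit learning described here can be approximated, in its simplest form, by counting what a user does in a given context and suggesting the most frequent action once it has been seen often enough. The class name, the context labels, and the threshold of three observations are all assumptions; production systems would use richer models.

```python
from collections import Counter, defaultdict
from typing import Optional


class HabitModel:
    """Count actions per context and suggest the most frequent one."""

    def __init__(self, min_observations: int = 3):
        self.min_observations = min_observations
        self._counts: defaultdict = defaultdict(Counter)

    def observe(self, context: str, action: str) -> None:
        self._counts[context][action] += 1

    def suggest(self, context: str) -> Optional[str]:
        counts = self._counts.get(context)
        if not counts:
            return None  # nothing learned for this context yet
        action, count = counts.most_common(1)[0]
        # Only suggest once the pattern has repeated enough times.
        return action if count >= self.min_observations else None


model = HabitModel()
for _ in range(3):
    model.observe("weekday_morning", "flat white")
model.observe("weekday_morning", "espresso")
print(model.suggest("weekday_morning"))
print(model.suggest("weekend"))
```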

C. A Deep Dive into the Major Players and Their AI Strategies

The race to dominate the in-car AI space is intensely competitive, with tech giants and automakers forming strategic alliances and developing proprietary solutions.

A. Mercedes-Benz and MBUX with ChatGPT Integration

Mercedes-Benz made headlines by being one of the first to integrate ChatGPT into its MBUX infotainment system. This move supercharged the existing “Hey Mercedes” voice assistant. The integration allows for vastly more natural conversations and the ability to handle complex queries that go beyond the car. Drivers can ask for detailed explanations of historical landmarks they are passing, request a recipe for dinner, or have the latest news headlines summarized, all while keeping their hands on the wheel and eyes on the road.

B. Google’s Built-In and Android Automotive OS

Google is moving beyond the phone-projection model of Android Auto with its “Google Built-In” platform, which runs on Android Automotive OS. This is a native operating system embedded directly into the vehicle, as seen in brands like Polestar, Volvo, and General Motors. The Google Assistant is deeply integrated, controlling virtually every vehicle function. Its strength lies in its seamless connection to the Google ecosystem (Maps, Calendar, YouTube Music, and your personal Google account), providing a holistic and predictive experience. It can automatically suggest departure times based on calendar appointments and current traffic, for instance.

C. Apple’s Next-Generation CarPlay and Siri Evolution

Apple is taking a similarly ambitious approach with its next-generation CarPlay, announced to be far more deeply integrated than the current version. It aims to take over not just the central screen but also the instrument cluster, controlling climate settings, radio, and other vehicle functions. With enhanced Siri capabilities and deeper access to vehicle data, Apple is positioning its assistant as the central hub for both information and vehicle control, promising a unified experience for its massive user base.

D. Amazon’s Alexa Custom Assistant and Alexa Auto

Amazon is leveraging its dominance in the smart home with Alexa Auto. The strategy is to extend the Alexa ecosystem into the car, allowing users to control their home devices (lights, thermostat, security cameras) from the road and vice versa. Furthermore, with Alexa Custom Assistant, Amazon provides the underlying technology for automakers to build their own branded assistants, powered by Alexa’s AI. This allows companies like Stellantis to create a unique experience without building the AI from scratch.

E. Chinese Tech Giants: Baidu’s DuerOS and Xiaomi’s HyperMind

The Chinese market is a hotbed of innovation in this field. Baidu, a leader in AI and autonomous driving, has deployed its DuerOS assistant widely. It is known for its exceptional natural language understanding of Chinese dialects and its deep integration with local services. Similarly, Xiaomi’s recent entry into the automotive sector with the SU7 features its “HyperMind” assistant, which claims to learn from user behavior and proactively trigger scenarios, like automatically lowering the windows when you pull up to your favorite coffee shop’s drive-thru, based on learned patterns.

D. The Tangible Benefits: How Next-Gen Assistants Enhance Every Journey

The move to advanced in-car AI is not about technological boasting; it delivers concrete, meaningful benefits that enhance safety, convenience, and enjoyment.

A. Enhanced Safety Through Reduced Cognitive Load

The primary safety benefit is the significant reduction of driver distraction. Because drivers can control complex functions through natural speech, they can keep their hands on the wheel and eyes on the road. There’s no need to fumble with touchscreens or buttons to adjust the climate, find a new playlist, or send a message. The assistant handles it all through conversation, minimizing the time the driver’s attention is diverted from the critical task of driving.

B. Unprecedented Convenience and Proactive Support

These assistants shift the paradigm from reactive to proactive. They don’t just wait for commands; they anticipate needs.

- Smart Routing: The system might suggest leaving 15 minutes early for an appointment due to unexpected traffic, and it can automatically reserve a parking spot at your destination.
- Predictive Comfort: It can pre-condition the cabin temperature based on the weather forecast and your personal schedule.
- Seamless Errands: It can handle complex, multi-stop journeys, like “Find a gas station, then a coffee shop, and then take me to work,” optimizing the route for efficiency.
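The “leave 15 minutes early” suggestion comes down to simple arithmetic on the appointment time and the live travel estimate. The sketch below assumes the assistant already has a typical and a current travel time in minutes; the function name and the five-minute buffer are illustrative choices.

```python
from datetime import datetime, timedelta


def plan_departure(
    appointment: datetime,
    typical_min: int,
    live_min: int,
    buffer_min: int = 5,
) -> tuple[datetime, int]:
    """Return the departure time and how much earlier than usual it is."""
    depart = appointment - timedelta(minutes=live_min + buffer_min)
    # Extra lead time caused by traffic; never negative.
    minutes_earlier = max(0, live_min - typical_min)
    return depart, minutes_earlier


depart, earlier = plan_departure(
    datetime(2024, 1, 8, 9, 0), typical_min=30, live_min=45
)
print(f"Leave at {depart:%H:%M}, {earlier} minutes earlier than usual.")
```

With a 09:00 appointment, a usual 30-minute drive, and a live estimate of 45 minutes, the sketch recommends leaving at 08:10, which is 15 minutes earlier than usual.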

C. Deep Personalization for Every Occupant

The car becomes an extension of your digital life. Profiles ensure that every driver or frequent passenger experiences an environment tailored specifically to them, from seat position and mirror angles to favorite podcasts and navigation preferences. This is especially valuable in multi-driver households or for shared mobility services.

D. A New Frontier for In-Car Commerce and Services

The assistant opens up new avenues for commerce, often referred to as the “connected car economy.” With secure payment integration, drivers can effortlessly pay for fuel, parking, food orders, or even tolls directly through the car’s interface, using only their voice. The assistant can suggest and facilitate transactions based on context, like ordering your usual coffee as you approach the café.

E. Enhanced Vehicle Health and Maintenance Management

By continuously monitoring vehicle data, the assistant can provide proactive maintenance alerts. It can go beyond a simple “check engine” light to explain the potential issue in plain language, suggest the severity, and even help schedule a service appointment at a nearby dealership, all integrated into your calendar.
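A minimal sketch of the “plain language” step might map diagnostic trouble codes to short explanations. The three codes below are standard OBD-II codes, but the severity labels, the wording, and the `explain` function are assumptions made for illustration.

```python
# Standard OBD-II trouble codes; severity and wording are illustrative only.
DTC_EXPLANATIONS = {
    "P0300": ("high", "The engine is misfiring on random or multiple cylinders."),
    "P0171": ("medium", "The engine is running lean: too much air or too little fuel."),
    "P0420": ("medium", "The catalytic converter is less efficient than expected."),
}


def explain(code: str) -> str:
    """Translate a trouble code into a driver-friendly message."""
    normalized = code.upper()
    severity, text = DTC_EXPLANATIONS.get(
        normalized,
        ("unknown", "Unrecognized code; a technician should read it."),
    )
    return f"[{severity}] {normalized}: {text}"


print(explain("p0420"))
print(explain("X9999"))
```

A real assistant would follow the explanation with an offer to book a service slot, as described above.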

E. Navigating the Roadblocks: Challenges and Ethical Considerations

Despite the immense promise, the widespread adoption of next-gen in-car assistants is not without its significant challenges and ethical dilemmas.

A. Data Privacy and Security: The Elephant in the Room

A car with an always-listening, sensor-rich AI assistant is a data collection powerhouse. The amount of personal data it gathers, from your location history and driving habits to your conversations and even biometric data, is staggering. This raises critical questions: Who owns this data? How is it stored and used? Could it be sold to third parties or used by insurance companies to adjust premiums? A major data breach of a car manufacturer’s servers could have catastrophic privacy implications. Robust, transparent data policies and state-of-the-art cybersecurity are non-negotiable.

B. The Risk of Over-Reliance and Automation Complacency

As assistants become more capable, there is a risk that drivers may become over-reliant, trusting the AI to handle tasks it is not designed for. This is particularly dangerous in the context of semi-autonomous driving, where a driver might assume the AI is monitoring the road more effectively than it actually is. Maintaining driver engagement and ensuring the human remains the final authority is a critical design and educational challenge.

C. The Digital Divide and Feature Accessibility

Many of these advanced features will likely be offered as subscription services or reserved for premium vehicle trims. This could create a “digital divide” on the roads, where only wealthier consumers have access to the safety and convenience benefits of advanced AI, potentially widening existing inequalities.

D. The Complexity of Human-Machine Interaction (HMI)

Designing an intuitive and non-distracting interface for such a powerful system is incredibly difficult. If the voice assistant misunderstands commands frequently or the visual feedback is confusing, it can become a source of frustration and distraction, negating its core benefits. The HMI must be meticulously designed to be simple, reliable, and secondary to the act of driving.

F. The Future Horizon: What Comes After the Assistant?

The evolution of the in-car assistant is inextricably linked to the development of autonomous driving. In a fully self-driving car, the role of the assistant transforms completely. It ceases to be a “driving assistant” and becomes a “living space concierge.” It will be tasked with managing productivity, entertainment, and comfort. You could have a business meeting, watch a movie, or play a game with the AI facilitating the experience. Furthermore, we will see the emergence of Emotional AI, where systems can detect driver emotion through voice tone and facial expression and respond appropriately, for example by playing uplifting music if they sense sadness or suggesting a calming break if they detect anger or stress.

Conclusion

The arrival of next-generation in-car personal assistants marks a pivotal moment in automotive history. We are transitioning from seeing the car as a mere mode of transportation to embracing it as an intelligent, connected, and deeply personal space. These AI co-pilots, powered by generative AI and a web of sensors, promise a future of safer, more convenient, and more enjoyable journeys. However, this future must be built on a foundation of robust ethical standards, unwavering commitment to privacy, and human-centric design. The road ahead is as much about navigating these complex challenges as it is about harnessing the technology itself. One thing is certain: the relationship between human and machine has forever changed, and the dashboard of the future will be the command center for an experience far richer than just getting from point A to point B.